What is a voltmeter? How does it work, and how does it measure voltage in volts? An overview of the working principle, the history of the voltmeter, and the main voltmeter types.

A voltmeter is an instrument used to measure the electrical potential difference between two points in an electrical circuit. It is typically connected in parallel with the component or circuit being measured, and it measures the voltage across that component or circuit. The unit of measurement for voltage is volts (V), and voltmeters are calibrated in volts to provide accurate readings. Voltmeters can be analog or digital, and they come in various types and ranges to accommodate different levels of voltage. They are commonly used in electronic testing, electrical engineering, and in many other industries where electrical measurement is needed.

History of the Voltmeter

The history of the voltmeter dates back to the 1800s, when electrical experimentation was booming. One of the first practical, portable voltmeters was developed by Edward Weston, an American inventor, in 1888. Weston’s voltmeter was a moving-coil instrument that used a permanent magnet to create a magnetic field. The coil of wire in the instrument would rotate in response to the current driven through it by the voltage being measured, causing a needle to move across a scale and display the voltage reading.

Another early type of voltmeter was the electrostatic voltmeter, which used the electrostatic force (attraction or repulsion) between charged plates to measure voltage. This type of instrument was developed by Lord Kelvin in the 1860s and was very accurate, but it was also very delicate and expensive.

Over the years, the technology used in voltmeters has evolved, with the introduction of digital readouts, solid-state components, and other innovations. Today, voltmeters come in a wide variety of styles and ranges, from simple analog instruments to complex digital meters that can measure a wide range of electrical parameters.

Overall, the history of the voltmeter is closely tied to the history of electrical science and technology, and the development of these instruments has played a critical role in advancing our understanding of electricity and its practical applications.

What is the Working Principle of a Voltmeter?

A voltmeter works by measuring the voltage, or potential difference, between two points in an electrical circuit. The potential difference is the electrical energy transferred per unit of charge that moves between the two points, so one volt corresponds to one joule per coulomb.

The working principle of a voltmeter is based on the fact that when an electric potential difference is applied across a conductive path, a current flows through that path. The voltmeter is connected in parallel with the component or circuit, which means that it is connected across the two points where the voltage is to be measured.

When the voltage is applied across the circuit, a small current flows through the voltmeter. The amount of current that flows through the voltmeter is proportional to the voltage difference between the two points in the circuit. The voltmeter then uses a calibrated scale to display the voltage difference in volts (V).
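The proportionality between meter current and voltage follows from Ohm's law. As a minimal sketch, assuming a moving-coil movement with a series resistor that sets the range, the relationship can be worked through as below. The function names and the component values (a 50 microamp, 2000 ohm movement on a 10 V range) are hypothetical and chosen only for illustration.

```python
def multiplier_resistance(full_scale_volts, full_scale_amps, coil_ohms):
    """Series resistance that limits the coil current to full_scale_amps
    when full_scale_volts is applied across the whole meter."""
    total_ohms = full_scale_volts / full_scale_amps  # Ohm's law: R = V / I
    return total_ohms - coil_ohms


def reading_from_current(coil_amps, full_scale_volts, full_scale_amps):
    """Voltage shown on a linear scale for a given coil current."""
    return full_scale_volts * coil_amps / full_scale_amps


# Hypothetical movement: 50 microamps full scale, 2000 ohm coil, 10 V range.
series_ohms = multiplier_resistance(10.0, 50e-6, 2000.0)
half_scale = reading_from_current(25e-6, 10.0, 50e-6)

print(f"series resistor: {series_ohms:.0f} ohms")
print(f"reading at 25 uA: {half_scale:.2f} V")
```

With these assumed values the series resistor comes out at roughly 198 kilohms, and half of the full-scale current corresponds to half of the full-scale voltage, which is why the scale can be marked directly in volts.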

The internal workings of a voltmeter can vary depending on the type of instrument. For example, analog voltmeters typically use a moving-coil mechanism, while digital voltmeters use digital circuits to measure and display the voltage. However, the basic working principle of measuring the voltage difference between two points in a circuit remains the same.

Analog voltmeter

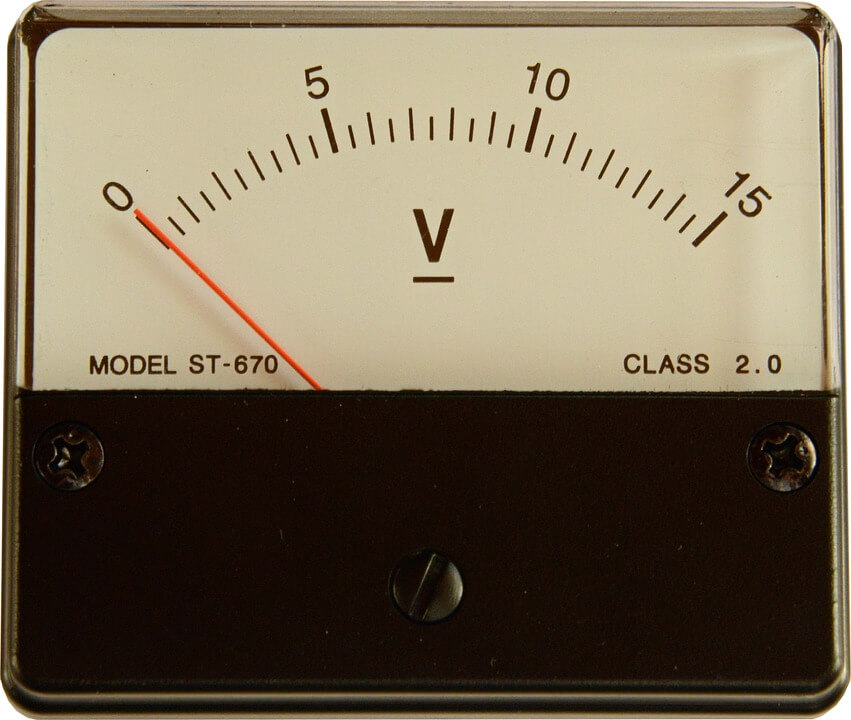

An analog voltmeter is a type of voltmeter that uses a moving-coil mechanism to measure and display voltage. It is sometimes simply called an analog meter. An analog multimeter is a related instrument that combines a voltmeter function with current and resistance ranges.

The basic construction of an analog voltmeter consists of a coil of wire that is suspended within a magnetic field. When a voltage is applied across the meter, a current flows through the coil and causes it to rotate within the magnetic field, which in turn causes a pointer to move across a scale. The scale is calibrated in volts (V) or millivolts (mV) to provide an accurate reading of the voltage being measured.
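The pointer movement described above can be sketched numerically. The example below assumes the common textbook model of a moving-coil movement, in which the magnetic torque on the coil is balanced by a restoring spring; the spring, the function name, and all parameter values are assumptions made for illustration and are not taken from a specific instrument.

```python
import math


def deflection_degrees(coil_amps, turns, flux_density_t, coil_area_m2,
                       spring_nm_per_rad):
    """Steady-state pointer angle where magnetic torque equals spring torque.

    Magnetic torque = turns * flux density * coil area * current
    Spring torque   = spring constant * angle
    """
    torque_nm = turns * flux_density_t * coil_area_m2 * coil_amps
    return math.degrees(torque_nm / spring_nm_per_rad)


# Hypothetical movement: 100 turns, 0.2 T field, 1 cm^2 coil, soft spring.
params = dict(turns=100, flux_density_t=0.2, coil_area_m2=1e-4,
              spring_nm_per_rad=6.4e-8)

full = deflection_degrees(50e-6, **params)
half = deflection_degrees(25e-6, **params)
print(f"full-scale deflection: {full:.1f} degrees")
print(f"half-current deflection: {half:.1f} degrees")
```

In this model the angle is directly proportional to the coil current, so halving the current halves the deflection. That linearity is what allows an evenly spaced scale.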

Analog voltmeters are generally designed to measure DC voltage, although some models can also measure AC voltage. They are often used in electronic testing and troubleshooting, as well as in other applications where electrical measurement is needed.

One advantage of analog voltmeters is that they can provide a visual indication of the rate of change of the voltage being measured. This can be useful for detecting fluctuations or transients in the voltage, which may not be immediately apparent from a digital readout.

However, one disadvantage of analog voltmeters is that they can be less accurate than digital voltmeters, especially at low voltages or in noisy electrical environments. They can also be more difficult to read than digital meters, especially for individuals with visual impairments or color blindness.

Amplified voltmeter

An amplified voltmeter, also known as an electronic voltmeter, is a type of voltmeter that uses an amplifier to amplify the voltage being measured. It is a more modern and advanced version of the analog voltmeter.

In an amplified voltmeter, the voltage being measured is first passed through an amplifier circuit, which amplifies the voltage to a level that can be easily measured and displayed on a digital readout. The digital readout typically displays the voltage in volts (V) or millivolts (mV).
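The amplify-then-measure idea can be illustrated with a short sketch. It assumes a hypothetical instrument with a few selectable gains and a display stage that accepts up to 2 V; the gain values, the 2 V limit, and the function names are illustrative assumptions, not details of any particular meter.

```python
GAINS = (1000, 100, 10, 1)   # hypothetical selectable gains, highest first
STAGE_FULL_SCALE = 2.0       # hypothetical limit of the display stage, volts


def pick_gain(input_volts):
    """Choose the highest gain that keeps the amplified signal in range."""
    for gain in GAINS:
        if abs(input_volts) * gain <= STAGE_FULL_SCALE:
            return gain
    raise ValueError("input exceeds the highest range")


def measure(input_volts):
    """Return (displayed volts, gain used) for an ideal amplifier."""
    gain = pick_gain(input_volts)
    stage_volts = input_volts * gain   # what the display stage actually sees
    return stage_volts / gain, gain    # scale back to the input voltage


reading, gain = measure(0.0015)        # a 1.5 mV signal
print(f"gain {gain}, reading {reading * 1000:.2f} mV")
```

Here a 1.5 mV input is raised to about 1.5 V before it reaches the display stage, and the reading is scaled back by the same gain so that the displayed value refers to the original input.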

Amplified voltmeters can measure both DC and AC voltage and are generally more accurate than analog voltmeters. They are also more versatile and can be used to measure a wider range of voltages and electrical parameters, such as resistance and current.

One advantage of amplified voltmeters is that they are generally more sensitive than analog voltmeters, which means that they can measure smaller changes in voltage. They are also faster and more responsive, making them better suited for measuring rapidly changing voltages or voltage transients.

Another advantage of amplified voltmeters is that they are more compact and portable than analog voltmeters, which can be useful for field measurements or in situations where space is limited.

Overall, amplified voltmeters are a popular choice for electronic testing and measurement, and they are widely used in a variety of industries, including electronics, telecommunications, and power generation and distribution.

Digital voltmeter

A digital voltmeter (DVM) is a type of voltmeter that uses digital circuits to measure and display voltage. It is a modern and advanced version of the analog voltmeter and is widely used in electronic testing and measurement.

In a digital voltmeter, the voltage being measured is first converted into a digital signal using an analog-to-digital converter (ADC) circuit. The digital signal is then processed by a microprocessor, which displays the voltage reading on a digital display.
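The conversion step can be sketched with an idealized ADC model. The example assumes a unipolar converter with a 5 V reference and 10-bit resolution; both values, along with the function names, are assumptions chosen for illustration, and real converters add noise, offset, and gain errors that are not modeled here.

```python
def adc_code(input_volts, vref=5.0, bits=10):
    """Ideal unipolar ADC: map 0..vref onto integer codes 0..2**bits - 1."""
    max_code = (1 << bits) - 1
    clamped = min(max(input_volts, 0.0), vref)  # inputs outside range saturate
    return round(clamped / vref * max_code)


def code_to_volts(code, vref=5.0, bits=10):
    """Convert a code back to the voltage shown on the display."""
    max_code = (1 << bits) - 1
    return code * vref / max_code


code = adc_code(3.3)
shown = code_to_volts(code)
step = 5.0 / ((1 << 10) - 1)           # voltage represented by one code step
print(f"code {code}, displayed {shown:.3f} V, step {step * 1000:.2f} mV")
```

The displayed value differs slightly from the true input because the converter can only represent voltages in discrete steps. Adding more bits makes the steps smaller and the reading finer.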

Digital voltmeters can measure both DC and AC voltage and are generally more accurate than analog voltmeters. They are also more versatile and can be used to measure a wider range of voltages and electrical parameters, such as resistance and current.

One advantage of digital voltmeters is that they are very easy to read and provide a precise numeric readout of the voltage being measured. They also update quickly, although a changing number can be harder to follow than a moving pointer when the voltage fluctuates rapidly.

Another advantage of digital voltmeters is that they are often equipped with additional features and functions, such as data logging, automatic ranging, and computer interface capabilities. This makes them more flexible and useful for a wide range of electronic testing and measurement applications.

Overall, digital voltmeters are a popular choice for electronic testing and measurement, and they are widely used in a variety of industries, including electronics, telecommunications, and power generation and distribution.
