Why is entropy so difficult to understand from a scientific standpoint? What is the problem of entropy? Does anyone understand entropy?

Entropy can be difficult to understand because it is defined and interpreted differently across scientific fields. In thermodynamics, entropy is often described as a measure of the disorder or randomness of a system; more precisely, it reflects how many microscopic arrangements are consistent with the system's observable state. In information theory, entropy measures the average uncertainty about the outcome of a random variable, or equivalently the information gained on learning that outcome.
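To make the information-theoretic definition concrete, here is a minimal Python sketch of Shannon entropy, H = -Σ p_i log2(p_i), for a discrete probability distribution. The function name `shannon_entropy` and the example distributions are chosen purely for illustration.

```python
import math

def shannon_entropy(probabilities):
    """Return the Shannon entropy, in bits, of a discrete distribution."""
    if abs(sum(probabilities) - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    # Outcomes with zero probability contribute nothing (p * log p -> 0).
    return sum(-p * math.log2(p) for p in probabilities if p > 0)

# Illustrative distributions (assumed values, not taken from any dataset).
fair_coin = shannon_entropy([0.5, 0.5])    # maximal uncertainty for two outcomes
biased_coin = shannon_entropy([0.9, 0.1])  # the outcome is easier to guess
certain = shannon_entropy([1.0, 0.0])      # no uncertainty at all
```

A fair coin carries exactly one bit of entropy, a biased coin carries less, and a certain outcome carries none, which matches the intuitive reading of entropy as uncertainty.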

Moreover, entropy is usually applied to systems with enormous numbers of components, which are hard to model. In statistical mechanics, for example, entropy is defined by counting the microscopic configurations available to large numbers of interacting particles, whose detailed behavior is difficult to predict and analyze.
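The statistical-mechanics view can be sketched with a toy model: N distinguishable particles in a box, each sitting in either the left or the right half. The number of microstates with n particles on the left is the binomial coefficient C(N, n), and Boltzmann's formula S = k ln W turns that count into an entropy. The box model and the function names below are assumptions made for illustration; `math.comb` requires Python 3.8 or later.

```python
import math

BOLTZMANN_K = 1.380649e-23  # Boltzmann constant in J/K (exact SI value)

def multiplicity(n_total, n_left):
    """Number of ways to place n_left of n_total particles in the left half."""
    return math.comb(n_total, n_left)

def boltzmann_entropy(n_total, n_left):
    """Boltzmann entropy S = k ln W of the macrostate 'n_left on the left'."""
    return BOLTZMANN_K * math.log(multiplicity(n_total, n_left))

n = 100
all_left = multiplicity(n, 0)        # a single microstate: every particle on one side
even_split = multiplicity(n, n // 2) # vastly more microstates
# Share of all 2**n microstates lying within 10 particles of an even split.
near_even = sum(multiplicity(n, k) for k in range(40, 61)) / 2**n
```

Even with only 100 particles, the evenly mixed macrostates account for almost all microstates. For realistic particle numbers the imbalance is so extreme that a system is, in practice, always found near the maximum-entropy macrostate.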

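Additionally, the second law of thermodynamics, which states that the entropy of an isolated system never decreases over time, can seem counterintuitive and hard to reconcile with everyday experience. Order does seem to arise spontaneously, as when water freezes or living organisms grow, but these systems are not isolated: they export at least as much entropy to their surroundings as they lose. It can also be difficult to grasp why an isolated system, with no exchange of energy or matter with its surroundings, should drift toward more disordered states of its own accord.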
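A short worked example shows the second law at work. When heat Q flows from a hot reservoir at temperature T_hot to a cold one at T_cold, the hot reservoir loses entropy Q / T_hot and the cold one gains Q / T_cold. The sketch below assumes both reservoirs are large enough that their temperatures stay constant; the function name and the numbers are illustrative.

```python
def entropy_change_heat_transfer(heat_joules, t_hot_kelvin, t_cold_kelvin):
    """Total entropy change (J/K) when heat flows from a hot to a cold reservoir."""
    ds_hot = -heat_joules / t_hot_kelvin   # hot reservoir loses entropy
    ds_cold = heat_joules / t_cold_kelvin  # cold reservoir gains more than that
    return ds_hot + ds_cold

# 1000 J flowing from 400 K to 300 K (assumed example values):
# -2.5 J/K for the hot side plus about +3.33 J/K for the cold side.
delta_s = entropy_change_heat_transfer(1000.0, 400.0, 300.0)
```

The total is positive whenever heat flows from hot to cold. Passing a negative `heat_joules` models heat flowing from cold to hot, which gives a negative total and is therefore ruled out for the two reservoirs taken together with no outside help.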

Overall, the difficulty in understanding entropy from a scientific standpoint stems from the multiple interpretations and applications of the concept, as well as the complexity of the systems to which it applies.

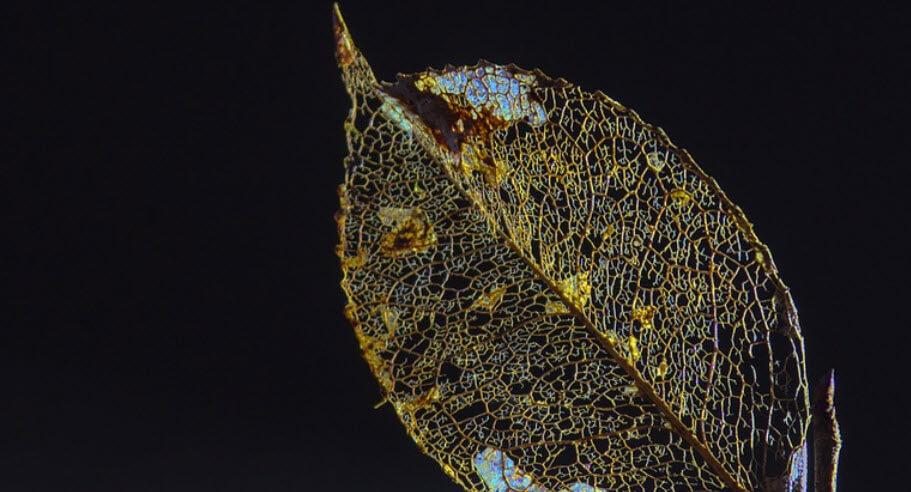

Source: pixabay.com

What is the problem of entropy?

The problem of entropy refers to the challenge of reconciling the second law of thermodynamics with other fundamental laws of physics, such as the laws of classical mechanics or quantum mechanics. The second law states that the entropy of an isolated system never decreases over time; applied to the universe as a whole, it points toward an eventual “heat death” in which no usable energy differences remain.

However, this law appears to contradict the laws of classical mechanics and quantum mechanics, whose fundamental equations are time-reversible: any process they allow running forwards in time is equally allowed running backwards.

This apparent contradiction is known as the “arrow of time” problem. It raises the question of why the universe appears to have a directionality in time, and why entropy seems to always increase rather than decrease.
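The tension between reversible laws and increasing entropy can be explored with the Kac ring, a standard toy model. Balls sit on a ring of sites; at each step every ball moves one site clockwise and flips color whenever it crosses a marked edge. The rule is deterministic and exactly reversible, yet a coarse measure of disorder still grows from an ordered start. The sketch below is one possible implementation; the "entropy" used is the mixing entropy of the color fraction, an illustrative choice, and all function names and parameters are assumptions.

```python
import math
import random

def step_forward(colors, markers):
    """Move each ball one site clockwise, flipping color on marked edges."""
    n = len(colors)
    new_colors = [0] * n
    for i in range(n):
        # The ball at site i crosses edge i and lands on site i + 1.
        new_colors[(i + 1) % n] = colors[i] ^ markers[i]
    return new_colors

def step_backward(colors, markers):
    """Exact inverse of step_forward: the dynamics are time-reversible."""
    n = len(colors)
    return [colors[(i + 1) % n] ^ markers[i] for i in range(n)]

def coarse_entropy(colors):
    """Mixing entropy (in nats) of the fraction of balls with color 1."""
    p = sum(colors) / len(colors)
    if p in (0.0, 1.0):
        return 0.0
    return -p * math.log(p) - (1 - p) * math.log(1 - p)

def run(n_sites=1000, marker_fraction=0.1, n_steps=50, seed=0):
    """Start from all balls in color 0 and record the entropy at each step."""
    rng = random.Random(seed)
    markers = [1 if rng.random() < marker_fraction else 0 for _ in range(n_sites)]
    colors = [0] * n_sites
    history = [coarse_entropy(colors)]
    for _ in range(n_steps):
        colors = step_forward(colors, markers)
        history.append(coarse_entropy(colors))
    return colors, markers, history

if __name__ == "__main__":
    colors, markers, history = run()
    print("entropy at start:", history[0])
    print("entropy after 50 steps:", history[-1], "(maximum is ln 2)")
```

Starting from the special all-one-color state, the coarse entropy climbs toward its maximum of ln 2. Applying `step_backward` the same number of times restores the initial state exactly, and after twice as many forward steps as there are sites the ring returns to its starting configuration. In this model the apparent one-way behavior comes from the special initial condition and the coarse description, not from the rule itself.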

Scientists have proposed various resolutions to the problem of entropy. The most widely discussed is that the universe began in a state of very low entropy at the Big Bang and has been increasing in entropy ever since, so that the arrow of time traces back to the initial conditions of the universe rather than to the dynamical laws themselves. The near-uniformity of the cosmic microwave background radiation is often cited as evidence that the early universe was in such a smooth, low-entropy state.

Overall, the problem of entropy remains an area of active research and debate in physics and cosmology.

Does anyone understand entropy?

Entropy is well understood by scientists and has been studied extensively in thermodynamics, statistical mechanics, and information theory. It has proven to be incredibly useful in understanding the behavior of complex systems, such as those found in physics, chemistry, and biology.

However, while scientists have a good understanding of the concept of entropy and its applications, there are still many open questions and debates surrounding the topic. For example, the problem of entropy, also known as the arrow of time problem, is a challenging issue that has yet to be fully resolved.

Furthermore, the concept of entropy can be difficult to grasp for those without a background in physics or mathematics. This is due to the abstract nature of the concept and the different interpretations and definitions it can have in different fields.

In summary, while scientists understand entropy well and apply it widely, open questions and challenges remain, and the concept can be difficult for non-experts to grasp fully.